How to Test with Generative AI

Testing with Generative AI is here – let’s learn to work with it now

It’s no secret that AI, and generative AI in particular, has arrived in software testing: it features in our communications and in our companies’ strategic plans.

Why this craze? Is it a new technological illusion? Is it a flash in the pan?

Of course, it is difficult to answer these questions definitively today. However, major generative AI models, both commercial and open-source, are already achieving impressive results in test design, implementation, and automation.

You might object that the results of generative AI, for example in test design or in writing automation code, are far from perfect. But isn’t this also the case for us humans? Generative AI can be approximate, incomplete, or imprecise, and at other times excellent and complete, just like us!

Human and generative AI synergy for testing tasks

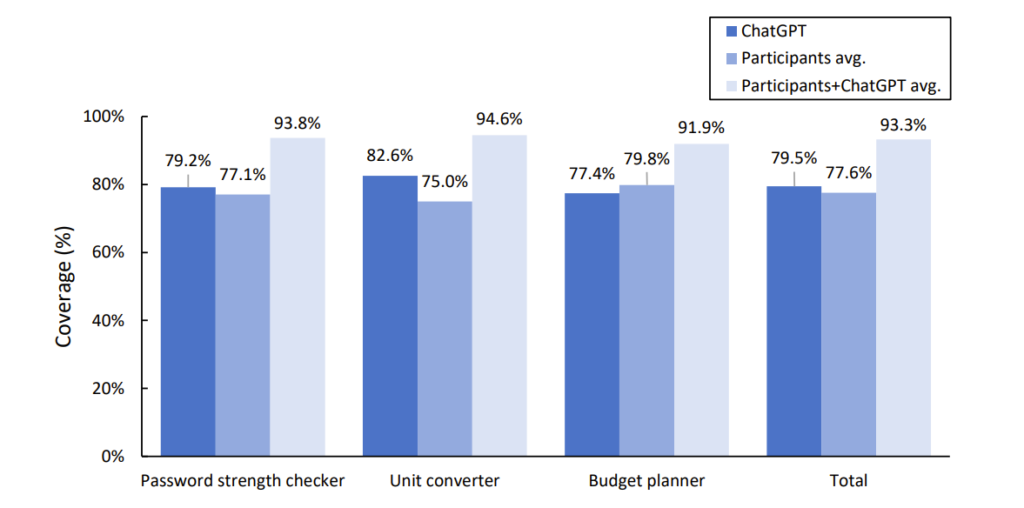

This figure is taken from a January 2024 article, “ChatGPT and Human Synergy in Black-Box Testing: A Comparative Analysis”.

This article, written by researchers at NTT in Japan, explores the ability of ChatGPT (GPT-4) to generate black-box test cases compared with human testers. In the experiment, black-box test cases were designed in parallel for three applications: on the one hand by ChatGPT, and on the other by four human testers.

Measuring the coverage obtained against the authors’ own definition of complete coverage, the graph shows that in this experiment:

- AI sometimes outperforms humans (in average coverage obtained)

- AI is sometimes prone to error and confusion

- But, even more importantly, the combined Human & AI coverage achieves an excellent score on each of the three applications tested (> 90% coverage).
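To see why the combined score can exceed both individual scores, here is a minimal Python sketch. It assumes coverage is measured as the share of a reference set of test conditions that a tester's cases reach; the condition IDs (`C1` to `C11`) and the `coverage` helper are hypothetical and are not data or code from the cited article.

```python
def coverage(covered: set[str], required: set[str]) -> float:
    """Return the fraction of required test conditions that are covered."""
    if not required:
        raise ValueError("required must not be empty")
    # Conditions outside the reference set (e.g. spurious cases) do not count.
    return len(covered & required) / len(required)


# Hypothetical reference set of ten test conditions, C1..C10.
required = {f"C{i}" for i in range(1, 11)}

# Hypothetical results: the human and the AI each miss different conditions.
human = {"C1", "C2", "C3", "C4", "C5", "C6", "C7"}
ai = {"C4", "C5", "C6", "C7", "C8", "C9", "C11"}  # C11 is not in the reference set

human_cov = coverage(human, required)
ai_cov = coverage(ai, required)
combined_cov = coverage(human | ai, required)  # union of both test suites

print(f"human: {human_cov:.0%}, AI: {ai_cov:.0%}, combined: {combined_cov:.0%}")
```

Because each side covers conditions the other misses, the union covers more of the reference set than either suite alone. The spurious condition `C11` stands in for an AI error: it adds nothing to coverage and still has to be reviewed by a human.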

Of course, this experiment is limited: three applications are a small sample, and the results would need to be reproduced. But doesn’t it point to the road ahead: integrating AIs as our test assistants while retaining our responsibility? AI assists us daily, but we make the decisions that ensure the test tasks are carried out correctly.

Mastering the use of generative AI for testing: urgent need for training

The maturing of generative AI and its availability to accelerate testing tasks open up new perspectives, but also raise challenges and issues to consider.

The first challenge is to master its use, define best practices, and integrate it optimally into our testing processes. There’s only one answer: we all need to be trained, and organizations need to train their test teams collectively.

It’s hard to imagine being able to master this technology without acquiring the knowledge and new skills that its use will entail. In particular, this means:

- Getting hands-on experience in how to use generative AI (querying, reasoning, results evaluation) for testing, and acquiring this new skill;

- Knowing, in practice, the risks of generative AI applied to testing (for example typical AI errors, energy consumption, and possible biases in the results), and knowing how to guard against these risks as far as possible;

- Mastering the integration of generative AI applications into test processes to achieve the expected results.

There are many resources available online, some of them in the form of instructor-led training. At Smartesting, we offer a two-day, 14-hour hands-on training course, “Accelerating Test Processes with Generative AI,” which offers a comprehensive understanding of how to leverage generative AI in software testing. Participants will gain hands-on experience in prompt engineering and in using AI for test design, optimization, automation, and maintenance.

The course emphasizes practical application, with two-thirds dedicated to hands-on exercises using real-world case studies. A key highlight is exploring risk mitigation strategies for AI in testing, including hallucinations, bias detection, and cybersecurity concerns. The training incorporates the latest advancements and LLMs like GPT-4, Anthropic Claude-3, Mistral, and LLaMa, providing participants with cutting-edge knowledge. Ultimately, attendees will leave equipped to effectively integrate generative AI into their testing processes, optimize efficiency, and enhance software quality.

In conclusion, testing with Gen AI is here. Good news or bad news?

It’s partly up to us. As with any disruptive innovation, we have levers to ensure that we benefit from the new situation rather than suffer from it. One of these levers is technical, methodological, and organizational expertise in generative AI for software testing.

By developing our skills in integrating generative AI efficiently, and by staying aware of the associated risks, we will gradually master it in the service of teams and individuals. This proactive approach to generative AI is certainly preferable to a position of fear or unrealistic refusal, which would ultimately leave us without mastery of the transformation that is coming to our software testing profession.