Generating regression tests on the fly: what for?

Published on 16.11.23

Introduction

We’ve been talking about AI for a few years now, but since the release of ChatGPT, it has come to be seen as an accessible El Dorado!

As far as I’m concerned, AI remains a tool, and the most important thing is not to know whether it’s AI, but to understand its potential uses. What does AI allow me to do better than before? What does AI allow me to do that I couldn’t do before, and that brings me value?

AI has many uses in testing, such as prioritizing and selecting tests for regression campaigns. These uses, based on Risk-Based Testing, aim to reduce the scope of testing while limiting the resulting increase in risk as much as possible. Another use of AI in testing is to generate regression test campaigns based directly on the way the software is used.

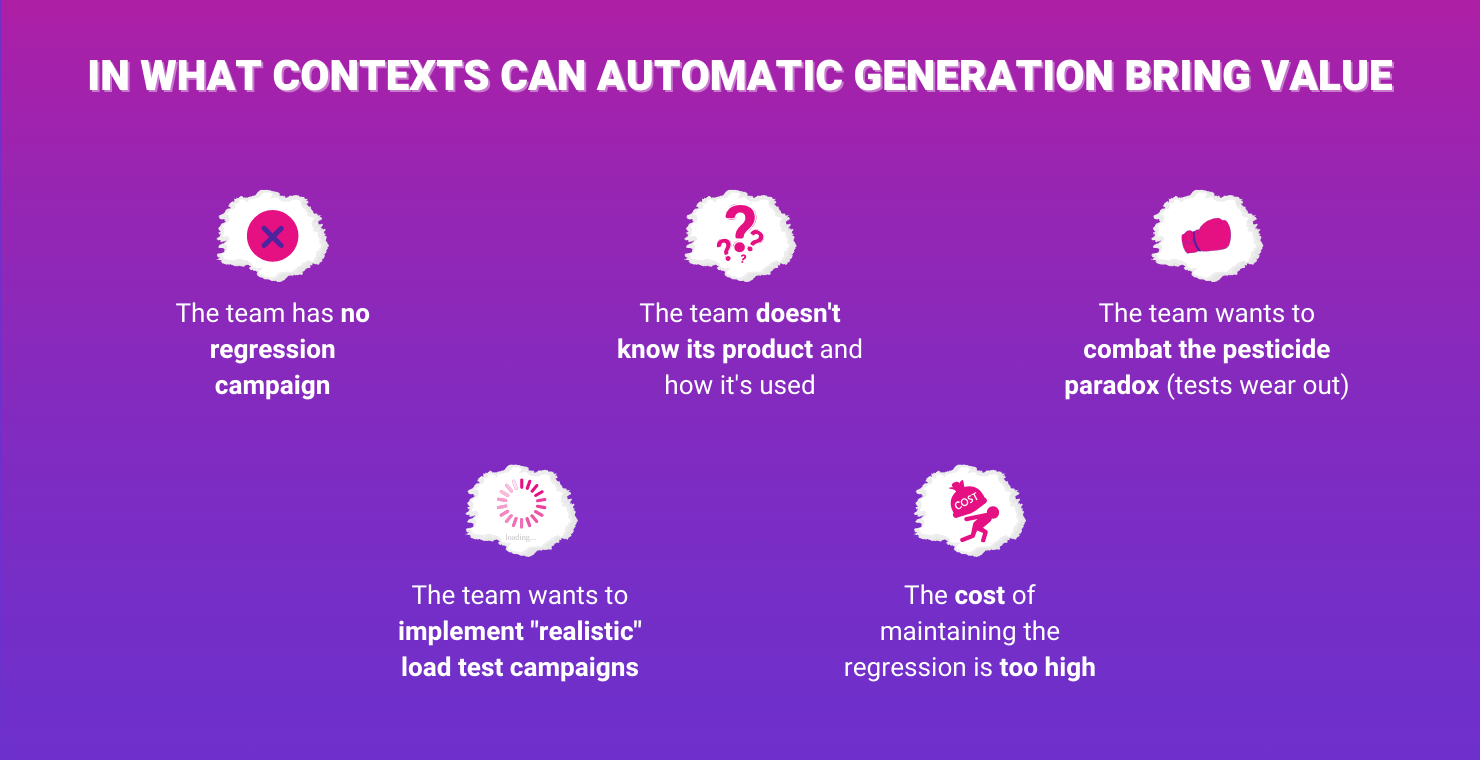

This sounds good, but in what contexts can automatic generation bring value? In this article, I’m going to suggest some contexts in which the creation of a regression campaign by AI brings value.

The team has no regression test campaign

This is the most obvious case. Having a ready-made regression campaign when you currently don’t have one, or when the one you have is no longer up to date, provides a basis for checking the application’s behavior. In this case, generating this campaign is an excellent start. It gives you something concrete and prevents you from getting lost.

On the other hand, this campaign, based solely on usage, remains incomplete. The team will also need to add tests that usage alone does not reveal, such as negative tests or security tests.

The team doesn’t know their product and how it’s used

It’s a recurring problem! The team develops a product, but doesn’t know in concrete terms how it is used. In the end, the tests are not necessarily well-targeted and the proposed changes are not as valuable as expected.

This can lead to the absence-of-defects fallacy and unnecessary work being carried out.

Generating a campaign based on usage lets you find out in concrete terms how users use the product, so you can target your tests and upgrades more effectively.

However, you need to be very careful to generate this campaign over a period that is representative of how the product is used.
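To make the idea of a representative period concrete, here is a minimal sketch in Python. It is not how any particular tool works: the `SESSIONS` log, its format, and the `top_paths` helper are all assumptions made up for illustration. It simply counts the user paths observed inside a chosen time window and keeps the most frequent ones as candidates for generated tests.

```python
from collections import Counter
from datetime import date

# Hypothetical usage log: (session date, sequence of screens visited).
SESSIONS = [
    (date(2023, 10, 2), ("login", "search", "cart", "checkout")),
    (date(2023, 10, 3), ("login", "search", "cart", "checkout")),
    (date(2023, 10, 3), ("login", "profile")),
    (date(2023, 10, 9), ("login", "search")),
    (date(2023, 11, 24), ("login", "promo", "checkout")),  # outside the window below
]


def top_paths(sessions, start, end, limit):
    """Return the `limit` most frequent paths seen between start and end (inclusive)."""
    counts = Counter(path for day, path in sessions if start <= day <= end)
    return counts.most_common(limit)


# Candidate regression paths for October only.
candidates = top_paths(SESSIONS, date(2023, 10, 1), date(2023, 10, 31), limit=2)
for path, hits in candidates:
    print(hits, " -> ".join(path))
```

Note that the promotional path of late November never appears in the October window. If that period matters for your product, the window has to include it.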

The team wants to combat the pesticide paradox (tests wear out)

Here we’re dealing with a fairly substantial regression campaign with updated tests, but on an ‘old’ product. The tests no longer detect anomalies, and failures are multiplying in production.

Rethinking the entire campaign, and identifying the tests that need to be changed, deleted, or added, requires a great deal of manual effort. It can therefore be worthwhile to regenerate a regression campaign quickly and complete it with the essential tests from the historical campaign.

As indicated, it is necessary to complete the generated campaign. Indeed, the regression must not only cover potential failures in common use, but also those in rarer use that are potentially malicious or simply problematic.

The cost of maintaining the regression tests is too high

The maintenance of regression tests (often automated) is a genuine concern. Many articles give good practices for limiting this maintenance. Other articles explain how to limit the number of tests in order to keep maintenance sustainable.

In this case, generating regression tests on the fly means that you only need to maintain a few tests (those deemed to be the most important), and the campaign can be completed with tests generated on the fly.

In this exercise, you need to pay particular attention to the tests that are retained and continue to develop them.
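As a rough illustration of this hybrid approach, the sketch below combines a small set of maintained tests with generated ones. Everything in it is hypothetical (the `build_campaign` helper, the test names, and the representation of a test as a sequence of steps); it only shows the principle: maintained tests always stay in the campaign, and a generated path is added only if it is not already covered.

```python
def build_campaign(maintained, generated):
    """Combine maintained tests with generated paths, skipping duplicates.

    maintained: dict mapping a test name to its sequence of steps.
    generated: list of step sequences produced from usage data.
    """
    campaign = [(name, tuple(path), "maintained") for name, path in maintained.items()]
    covered = {path for _, path, _ in campaign}
    for path in generated:
        path = tuple(path)
        if path not in covered:
            covered.add(path)
            campaign.append((f"generated_{len(campaign) + 1}", path, "generated"))
    return campaign


# Hypothetical data: one maintained key test, three generated paths.
MAINTAINED = {"checkout_happy_path": ("login", "search", "cart", "checkout")}
GENERATED = [
    ("login", "search", "cart", "checkout"),  # already covered by the maintained test
    ("login", "profile"),
    ("login", "profile"),  # duplicate generated path
]

campaign = build_campaign(MAINTAINED, GENERATED)
for name, path, origin in campaign:
    print(origin, name, " -> ".join(path))
```

With this split, the maintenance effort is concentrated on the `MAINTAINED` set, while the generated part can be thrown away and rebuilt at each campaign.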

The team wants to implement “realistic” load test campaigns

When we carry out load-testing campaigns, we try to simulate a flow of use generated by a certain number of users.

Having tests linked to usage means that you can use test paths that are close to what users actually do. This lets you simulate these loads in a more representative way.

In this case, it is important to weight the occurrence of the generated tests according to the frequency observed in real usage.
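One possible way to apply this weighting, sketched in Python under made-up assumptions: the `OBSERVED` counts and the `allocate_users` helper are hypothetical and not tied to any load-testing tool. The virtual users are split across the generated paths in proportion to the observed frequency, and the users left over after rounding down go to the paths with the largest fractional share.

```python
def allocate_users(observed_counts, total_users):
    """Split total_users across paths in proportion to their observed counts."""
    total = sum(observed_counts.values())
    exact = {path: total_users * count / total for path, count in observed_counts.items()}
    # Round every share down first.
    allocation = {path: int(share) for path, share in exact.items()}
    leftover = total_users - sum(allocation.values())
    # Give the remaining users to the paths with the largest fractional parts.
    by_remainder = sorted(exact, key=lambda path: exact[path] - allocation[path], reverse=True)
    for path in by_remainder[:leftover]:
        allocation[path] += 1
    return allocation


# Hypothetical number of sessions observed per path over the chosen period.
OBSERVED = {"browse": 620, "purchase": 290, "refund": 90}

allocation = allocate_users(OBSERVED, total_users=20)
print(allocation)
```

The total always equals the requested number of virtual users, so the load profile keeps the same proportions as real usage whatever the size of the campaign.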

Generating regression tests on the fly: Conclusion

To use AI or not to use AI: I’d say that is not the question. The real question is: what do I need, and how can I meet that need?

It’s true that AI opens up many possibilities and speeds up many tasks. In this article, I’ve given a few examples of contexts in which the use case of regression test generation has interesting potential, and there are others!

It is also important to be careful about the consequences and limits of your choices. AI, like any other technology, is not magic. It has its share of limitations and problems. We need to be aware of these so that we can adapt our practices and uses if we want to get the best out of it.